Getty Images offers ‘commercially safe’ generative AI tool

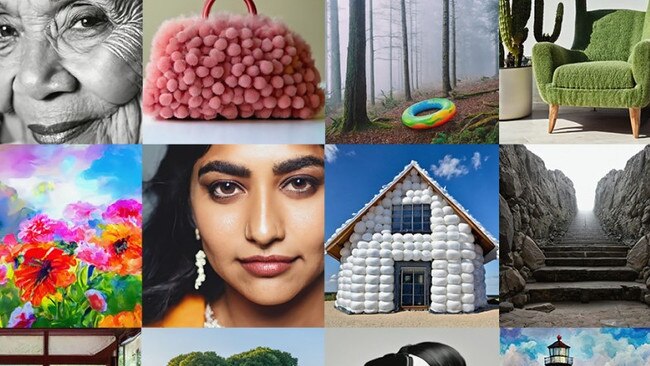

Getty Images is the latest to join a growing list of platforms developing generative AI images, and grappling with the social issues around them.

Visual media content company Getty Images is the latest platform to release its own generative AI tool.

Getty Images chief executive Craig Peters told The Australian the tool is “commercially safe” and has been several years in the making. It uses Edify, a text-to-image technology owned by AI software company Nvidia, to produce content.

The tool is trained exclusively on Getty Images’ creative library, the images of which have full indemnification for commercial use.

Content generated via the tool will not be fed back into the Getty Images and iStock content libraries for others to license. Contributors whose content is included in the training set will also be compensated with a share of revenue.

It comes as the development of generative AI tools has gained momentum since OpenAI made its text-generating AI tool ChatGPT available to the public nearly a year ago. It also comes as concerns grow around intellectual property risks for content creators and owners, as some AI platforms train their models on information harvested from the internet at large.

“We have four things that we try to do as a business. We try to help our customers create at a higher level, save time, save money, and we eliminate their IP risk,” Mr Peters said.

Getty joins a roll call of companies, particularly those in the business of making software, that have integrated content-producing tools into their systems throughout the year. Microsoft, Adobe and graphic design platform Canva are all among the growing list.

Mr Peters said the tool responds to customer demand. While scale is a byproduct, the idea is not to provide a larger volume of content, but to enhance the quality of imagery that can be used on media channels, such as marketing and advertising campaigns and collateral.

Marketing is one of the top functions in which AI is being used by companies. According to a recent global Bain & Co survey of nearly 600 companies across 11 industries, 39 per cent of respondents said they were using or considering using generative AI to speed up the development of marketing materials.

Recently, the proliferation of AI has seen the rise of “deepfake” images that bear increasingly accurate likeness to “authentic” photographs of real events and people, causing confusion among audiences.

Ahead of Donald Trump’s April 2023 arraignment in the US, a series of fake, AI-generated images went viral online, showing the former president running from authorities and resisting arrest.

In March, a similarly unusual image appeared online of Pope Francis wearing an oversized white puffer jacket, styled in the fashion of luxury brand Balenciaga. The image was created with Midjourney, a generative AI platform that produces image-based content.

Mr Peters said he is concerned that consumer trust is being eroded by content that is not “authentic”.

“I believe fundamentally trust has been eroded through not just these technologies, but (what) these technologies can do at scale, and that can combine over social media to do it at speed and reach. And I worry about those things.”

Mr Peters added that companies should disclose to customers imagery or content generated by AI. The same applies to other AI tools, such as chatbots.

The generative AI industry is currently largely unregulated and lacks transparency requirements for businesses. The EU, however, has recently proposed legislation that would require companies to disclose if their content is generated by AI.

“If I’m talking to a chatbot, I should know whether that’s a chatbot, or in person. Passing one off as the other is not something I think we should be doing. I think transparency ultimately leads to trust,” Mr Peters said.

“I’m not saying a chatbot is a bad thing to implement. I’m not saying generative AI is not something you should use in your business. Obviously, we’re providing a service that allows you to do just that.

“But I think you should have a level of transparency. I think that will accrue to the brands that use this.”

Mr Peters said images that resemble a specific branded product or even a US president, for example, are not something users can expect from Getty Images’ generative AI tool.

“It doesn’t know what Nike is. It doesn’t know who Joe Biden is. It doesn’t know what the Pentagon is,” Mr Peters said.

This is because Getty’s tool doesn’t harvest data outside of its own creative library of imagery.

Getty will also make the tool available to paying content partners. In those instances, businesses can safely train the AI tool on content they own the copyright for. These are among the only instances where an image of a branded sneaker, for example, could be generated – and only if that content partner was that specific brand.

Getty also banned content creators from uploading AI-generated content to its creative library in September 2022. However, Mr Peters said the ban is difficult for the company to police, other than by stipulating it as a requirement in contributor agreements. Getty currently works with around 541,000 contributors.

Is there still a place for human-led creativity when it comes to photographic content?

“I think creativity is something that is very much a human endeavour and output,” Mr Peters added.

“This tool allows you to be creative in a way that often a (camera) lens couldn’t, because you were bound to certain physical things or even software would be very time and labour intensive.

“I think it’s just an additive service to what we are bringing to the table.”