This was published 1 year ago

Opinion

When OpenAI’s CEO was sacked I thought it was corporate trivia. Here’s why it’s not

Sean Kelly

Columnist

In recent days, there has been utter chaos at perhaps the most important company in the world. Sam Altman, the very rich CEO of OpenAI, was suddenly removed from his post. There were protests and resignations. An interim CEO was appointed. Then the interim CEO was un-appointed, and Altman – just 38 years old – was back.

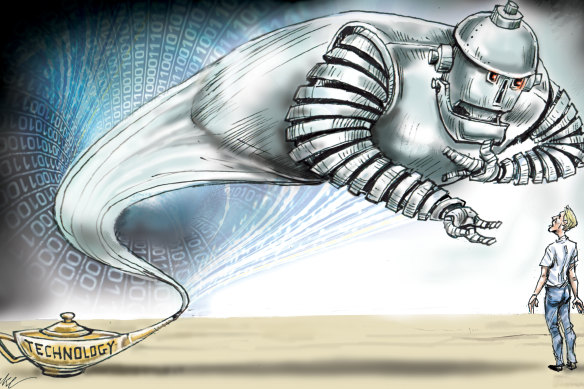

Perhaps this sounds like corporate trivia to you; when Altman was sacked 10 days ago, it did to me too. But in trying to comprehend what went on, and why it mattered, I felt, for the first time, what I suspect will very soon become a common human experience: the fear of the potential of Artificial Intelligence to radically alter our world.

When Altman was sacked, I felt, for the first time, what I suspect will very soon become a common human experience. Credit: Joe Benke

Altman himself is open about the fact that risks exist, but is optimistic. In general, he comes across as sensible and sane. In an interview with Bloomberg’s Emily Chang in June, when asked about the unusual fact that he does not have a financial stake in the $86 billion company (technically, OpenAI is a not-for-profit with a for-profit arm), he said that most people struggled to grasp the concept of “enough money”.

He has, it should be noted, used some of that money to prep for catastrophe. In 2016, before he was heading up an AI project, he listed a pandemic, nuclear war, and AI “that attacks us” as possible disasters. He tried not to think about it too much, “But I have guns, gold, potassium iodide, antibiotics, batteries, water, gas masks from the Israeli Defence Force, and a big patch of land in Big Sur I can fly to.” This fact may affect how seriously you take his professed optimism.

In an interview with The Atlantic earlier this year, he downplayed this prepping as a hobby. But he said something more frightening still: that his company had built artificial intelligence that they would never release – because it was too dangerous.

That fact has re-emerged in recent days as a possible reason he was fired: Reuters reported on Thursday that researchers had written to the board warning them this new AI could threaten humanity. (I’m assuming it was the same AI. If not, the company has two separate AI models considered dangerous. I hope not; one seems like enough.)

This “dangerous AI” was one reason I went from skipping over articles about AI to taking warnings seriously. (And it was while reading that article, by Ross Andersen, that I first felt fear: some of the bits that scared me most are below.)

The second was the dramatic language employed by those in AI (who are working towards Artificial General Intelligence – something closer to human intelligence) even when they’re being positive. Altman told Chang a lot of people spoke about AI as though it was the last technological revolution. “I suspect that from the other side it will look like the first”.

The third is speed. “The main thing that I feel is important about this technology,” Altman said, “is that we are on an exponential curve, and a relatively steep one, and human intuition for exponential curves is like, really bad…”

The fourth was the idea of AI machines working together. Another OpenAI board member (replaced after the Altman debacle) told The Atlantic of watching AI bots play a video game: completing complex tasks together, seeming to communicate by “telepathy”. At scale, this could see a single AI organisation as powerful as 50 Googles: “unbelievably disruptive power”.

The fifth is the ability of AI to deceive us – more sinister still, to develop the intention to deceive us. Several AIs have already learnt to lie to win games or complete tasks efficiently.

A technology progressing too fast for us to grasp, making changes so large they will reshape the world, with the power to collaborate instantaneously as it deceives us about the fact that it is doing so – and we know its creators consider at least one version too dangerous to release.

For some, these are existential factors: they believe AI may destroy us. I don’t think you have to go that far: for me, the idea there is a gaping, incomprehensible chasm between the present and the near future is confronting enough.

There are three major political challenges too. Altman told The Atlantic he meets people everywhere worried that some will become very rich, and most very poor. As Peter Hartcher wrote earlier this year, it seems very likely that many, many white-collar jobs will go – again, at unprecedented speed and scale.

Now – the third challenge – imagine the anger at these events magnified and manipulated for political purposes by powerful AI. Historian Timothy Snyder has written of the way that, in the 2016 US presidential election, Russian bots played a role that few at the time had any awareness of. He has described the way the internet – unlike older forms of propaganda – can seem unmediated, the message “inside us without our noticing”. This lack of awareness can then persist afterwards, as people convince themselves they voted independently. AI bots will be more effective still.

You don’t need any of these, though, to get to a grim place. All you need is to think of the impact of tech in recent years: plenty of advantages, and huge, world-changing problems: disinformation, polarisation, insecure workers, atomisation, depression, Trump. Whatever problems big tech introduces, it introduces at scale, too fast for us to act.

Now consider the fact that, when talking about AI, problems of this magnitude are at the bottom of the list of things to worry about.

It’s not all bad. Altman thinks AI will “end poverty”, dramatically improve healthcare everywhere, improve education. But the potential downsides are very large. I’ve stopped skipping over news about AI, and I think you should too.

Sean Kelly is author of The Game: A Portrait of Scott Morrison, a regular columnist and a former adviser to Julia Gillard and Kevin Rudd.
