Explainer

‘Die as a human or live forever as a cyborg’: Will robots rule the world?

Will nanobots and brain chips help us thrive – or could cyborgs wipe us out? In a series exploring the science behind science fiction, we consider artificial intelligence and our future with machines.

By Sherryn Groch and Tim Biggs

In movies, they’re the bad guys – killer cyborgs with bones of steel and lightning-fast reflexes, perhaps an Austrian accent too. But Peter Scott-Morgan has never been afraid of robots. As a scientist and roboticist by trade, he spent decades researching how artificial intelligence (AI) might transform our lives.

Then, in 2017, Dr Scott-Morgan was diagnosed with motor neurone disease, the same paralysing condition that killed Stephen Hawking. Months after puzzling over his “wonky foot” falling asleep, he was told he had two years to live.

He had other ideas. To survive, he would turn to the technology he had spent his career researching. He would become the cyborg. Scott-Morgan has now had two major surgeries to help keep himself alive with robotics – machine “upgrades” that breathe for him, help him speak, and hopefully will even see him stand again as the advancing paralysis traps him inside his body. He plans to merge his brain with AI eventually too, so he can speak with his thoughts rather than the flicker of his eyes. “And I’m OK with giving up some control to the AI to stay me,” he says. “Though that might change what it means to be human ... There’s a long tradition of scientists experimenting on themselves. But die as a human or live as a cyborg? To me, it’s a no-brainer.”

But what about the rest of us? Is humanity destined to merge with machine? We keep hearing that the robots are coming to take our jobs, but how likely are they to stage a coup? And why are Facebook and Elon Musk already building machines to read our thoughts?

Credit: Illustration: Matt Davidson

How do machines ‘think’?

A century ago, a Spanish scientist mapped the human brain and uncovered a hidden kingdom. As microscopes began to peer deeper into that mass of little grey cells, Santiago Cajal laid bare the wiring within, so dense he called it a jungle. It is from his detailed drawings that the world understood neurons for the first time and how they exchange information in a tangled network, giving rise to the senses, the emotions and possibly even consciousness itself.

Decades later, a philosopher and a young, homeless mathematician wondered if that network could be broken down into the most fundamental binary of logic: true or false. Neurons could, after all, be considered on or off, firing a signal or not. This theory, by Warren McCulloch and Walter Pitts at the University of Chicago, proved to be an incomplete model for the human brain, too simple to capture all the strange magic really going on inside. But it did give rise to the binary code of computers – those ones and zeroes now form infinite variations of on or off to tell machines what to do. Scientists have been trying to bring computers closer to human brains, at least in function, ever since.
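The on-or-off idea can be sketched in a few lines of Python. This is a simplified illustration rather than the pair's original formulation: it assumes every input is excitatory and ignores the inhibitory inputs their model also allowed, and the function names are invented for this example.

```python
def mcp_neuron(inputs, threshold):
    """Fire (return 1) when enough binary inputs are switched on, else stay off (0)."""
    return 1 if sum(inputs) >= threshold else 0


def logical_and(a, b):
    # Both inputs must be on before the neuron fires.
    return mcp_neuron([a, b], threshold=2)


def logical_or(a, b):
    # A single active input is enough to make the neuron fire.
    return mcp_neuron([a, b], threshold=1)


if __name__ == "__main__":
    # Print the truth table for both gates.
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

Changing nothing but the threshold turns the same unit from an AND gate into an OR gate, which is the sense in which a firing neuron can be read as a statement of true or false.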

Because machines interpret the world through this binary code, and algorithms (rules made from that code), they are good at a lot of specific things we find difficult, such as solving complex equations fast (and playing chess better than a grandmaster). Yet they often struggle with the mundane things we, with our more complex, adaptable thinking centres, find easy: recognising facial expressions, making small talk and, most of all, improvising.

To overcome this, machine learning models seek to “train” computers to categorise and then react to things themselves rather than waiting on human programming. Over the past decade, one such model known as deep learning has charged beyond the rest, fuelling an AI boom. It’s why your iPhone can recognise your face and Alexa understands you when you ask her to switch on the lights. And deep learning did it by going back to Cajal’s neural jungle. The learning is said to be “deep” because a machine is trained to classify patterns by filtering incoming information through layers of interconnected neuron-like “nodes”.
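What filtering through layers of nodes means can be shown with a deliberately tiny network. The sketch below is an assumption-laden toy: its weights are set by hand rather than learnt from data, and it has one hidden layer where real deep learning systems have many. It computes "exclusive or", a pattern that no single on-or-off node can capture alone.

```python
def step(x):
    """A node fires (1) only when its weighted input is positive."""
    return 1 if x > 0 else 0


def layer(inputs, weights, biases):
    """Pass the inputs through one layer of nodes and return each node's output."""
    return [
        step(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Hand-picked values for illustration only; a real network would learn these.
HIDDEN_WEIGHTS = [[1, 1], [1, 1]]   # first node acts like OR, second like AND
HIDDEN_BIASES = [-0.5, -1.5]
OUTPUT_WEIGHTS = [[1, -1]]          # fires when OR is on but AND is off
OUTPUT_BIASES = [-0.5]


def xor_net(x1, x2):
    hidden = layer([x1, x2], HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)[0]


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "->", xor_net(a, b))
```

The hidden layer first turns the raw inputs into two simpler features, and the output node combines them. Training a real network amounts to finding such weights automatically from examples.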

“I’m sorry, Dave, I’m afraid I can’t do that.” In the 1968 sci-fi classic 2001: A Space Odyssey, a computer called HAL (Heuristically programmed ALgorithmic) takes over a spaceship. Credit: Fair Use

While these artificial networks take a staggering amount of data to train compared to a human brain, experts such as Scott-Morgan hope they will only get better and more efficient as computing power increases (it is roughly doubling every two years). Already, AI can translate speech, trade stock, and perform surgery (under supervision). Since his own surgical journey was documented in the British documentary Peter: The Human Cyborg, Scott-Morgan has been upgrading to a “very Hollywood cyborg-like interface” that uses AI to track the movement of his eyes across its screen with tiny cameras and then offers up phrases for his robot voice to say – predictive text based on the letters he has spelt out so far.
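The idea of offering phrases based on the letters spelt out so far can be illustrated with a simple lookup. This is not how Scott-Morgan's interface works internally, which the article does not detail; the phrase list, the counts and the function name below are all hypothetical, and a real system would likely rely on a trained language model rather than a fixed table.

```python
# Hypothetical record of how often each phrase has been used before.
PHRASE_COUNTS = {
    "thank you": 12,
    "that sounds good": 7,
    "the tea is cold": 2,
    "hello there": 9,
}


def suggest(prefix, counts=PHRASE_COUNTS, limit=3):
    """Return the most-used phrases that start with the letters typed so far."""
    prefix = prefix.lower()
    matches = [phrase for phrase in counts if phrase.startswith(prefix)]
    # Most frequent first; ties are broken alphabetically.
    return sorted(matches, key=lambda phrase: (-counts[phrase], phrase))[:limit]


if __name__ == "__main__":
    print(suggest("th"))
    print(suggest("he"))
```

Each extra letter narrows the list, so a speaker selecting text by eye movement can often pick a whole phrase after only two or three characters.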

As UNSW professor of AI Toby Walsh points out, machines are not limited by biological processing speeds the way humans and animals are. But others suspect that the capability of even this kind of AI is about to hit a wall. At the University of Sheffield, computer scientist James Marshall says deep learning networks are still based on a “cartoon of how the [human] brain works”. They are not really making decisions, because they do not understand for themselves what matters and what doesn’t. That means they’re fragile. To tell a picture of a cat from a dog, for example, an AI needs to sift through a huge trove of images. While it might pick up tiny changes that would escape the notice of a human, such as a few pixels out of place, these tiny changes usually don’t matter a lot because we understand the main features that set a cat apart from a dog. “But suddenly you change some pixels and the AI thinks it’s a dog,” Marshall says. “Or if it sees a drawing of a cat or a cat in real life [in 3D] it might have to start from scratch again.”

The tendency of AI, however powerful, to break in unexpected ways is part of the reason those driverless cars we keep being promised are yet to arrive. Machines can be fooled even into seeing things that aren’t really there – driverless cars tricked into accelerating past stop signs when the addition of a few stickers on the sign makes them instead perceive increased speed limits; or facial recognition programs duped into skipping past suspects wearing wigs and glasses.
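The fragility Marshall describes, where changing a few pixels flips the answer, can be reproduced on a toy scale. The sketch below assumes a made-up four-pixel "image" and a classifier that is nothing more than a weighted sum; real image classifiers are far larger, but attacks in the same spirit nudge every pixel slightly in whichever direction hurts the current answer most.

```python
# Toy "cat vs dog" classifier: a weighted sum of four pixel brightness values.
# The weights and the image are invented numbers for illustration only.
WEIGHTS = [0.9, -0.6, 0.4, -0.7]
IMAGE = [0.5, 0.4, 0.5, 0.3]


def score(pixels, weights=WEIGHTS):
    """Positive scores mean 'cat', anything else means 'dog'."""
    return sum(w * p for w, p in zip(weights, pixels))


def classify(pixels):
    return "cat" if score(pixels) > 0 else "dog"


def nudge_against(pixels, weights=WEIGHTS, epsilon=0.1):
    """Shift each pixel by epsilon in the direction that lowers the cat score."""
    return [p - epsilon if w > 0 else p + epsilon for w, p in zip(weights, pixels)]


if __name__ == "__main__":
    tampered = nudge_against(IMAGE)
    print("original:", classify(IMAGE))
    print("tampered:", classify(tampered))
```

No pixel moves by more than 0.1 on a scale of 0 to 1, yet the label switches from cat to dog, because many small nudges that all push the same way add up.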

Any AI network is vulnerable to this kind of manipulation, and if hackers know its weak points they can do more than break it, they can hijack it to perform a new task entirely. Of course, AI can be trained to identify and resist this kind of sabotage too but, at some point, it will encounter a problem it hasn’t prepared for.

Perhaps a little paradoxically, some experts say that a way to give deep learning more common sense is to fuse it with the old, more rigid form of AI that came before it, where machines used hard-coded rules to understand how the world worked. Others say deep learning needs to become more flexible yet, writing its own algorithms and programs to perform new functions as it needs to, even testing its actions in the real world through robotics (or at least very good simulators) to help it understand causality. Amazon’s new line of Alexa assistants looks through a camera to better understand the world (and their owners).

“But I don’t think [deep learning] will ever work for driverless cars,” Marshall says. “When you have to build a more and more complicated machine for a fairly simple task, maybe the machine is built wrong.”

Arnold Schwarzenegger (and his iconic Austrian accent) starred as a killer cyborg in The Terminator franchise.

What if we could model AI on how animals think? Or 3D print a brain?

Marshall is flying a drone around his lab. It’s not bumping into walls, the way drones normally do when trying to distinguish one beige slab of office wallpaper from another. This drone has a tiny chip in its “brain” holding an algorithm borrowed from a honeybee. It tells it how to navigate the world as the insect does.

At Marshall’s lab in Sheffield, now a company offshoot of his university called Opteran, the team is trying something new – modelling machine thinking on animals. Marshall calls it “natural intelligence”, not artificial intelligence. Autonomy, the kind driverless cars and robot vacuums need to navigate their surrounds, is a solved problem, he says. “It happens all the time in the natural world. We require very little brain power ourselves to drive, most of the time we’re on autopilot.”

Bees have a less formidable number of neurons than humans – about a million, next to tens of billions – and yet they can still perform impressive behaviours: navigating, communicating and problem-solving. Marshall has been mapping their brains, training them to perform tasks such as flying through tunnels and then measuring their neural activity; making silicon models of different regions of their brain according to their function and then converting that into algorithms his machines can follow.

“It’s like a jigsaw puzzle,” Marshall says. “We haven’t mapped it all yet, even those million neurons still interact in really complex ways.”

So far, he has converted into code how bees sense the world and navigate it, and is busy finalising algorithms from the decision-making centre of their brains. Unlike Cajal, he’s not looking to record “all the exquisite detail that keeps the brain alive”. “We just need how it does the function we want. We don’t just reproduce the neurons, we reproduce the computation.”

When he first put his bee navigation algorithm in the drone, he was stunned at how much it improved, changing course as people moved around it, as walls came closer. “That’s when we saw it could work,” he says. “But because everyone is focused on deep learning, we decided to make our own company to scale it up.”

Marshall is also mapping the brains of ants to improve ground-based robots, imagining a world in which autonomous devices are as common as computers, cleaning and improving the world around us. And as machines get smaller – smaller even than the head of a pin or the width of a human hair – scientists hope they may help fight disease in the body too, cleaning blood or killing cancer and infection. Perhaps one day these “nanobots” could even repair the nerves fraying apart in people with motor neurone disease such as Scott-Morgan, or keep humans alive longer.

Marshall hopes to eventually look into the brains of larger animals too, including primates. There scientists might find more complex functions again, beyond just autonomy, and into advanced problem-solving, even moral reasoning. Still, just as Marshall is sure his robot bee is not a real bee, he doubts we’d be able to reproduce an entire human brain in silico and fire it up to see if some kind of consciousness springs to life. “A lot of this research comes out of that very question: could we just replicate the brain somehow, suppose we had a 3D printer,” Marshall says. “But the brain isn’t just its neurons, it’s how it all interacts. And we still don’t understand it yet.”

In his latest book Livewired, US neuroscientist David Eagleman describes in new detail the plasticity of the human brain, where neurons fight for territory “like drug cartels”. There may even be a kind of evolution, a survival of the fittest being waged within our minds day to day, as new neural connections are forged. Quantum scientists, meanwhile, wonder if reactions are happening inside the brain, at its smallest scale, which we cannot even measure. How then could we ever hope to replicate it accurately? Or “upload” someone’s consciousness to a machine (another popular sci-fi plot)?

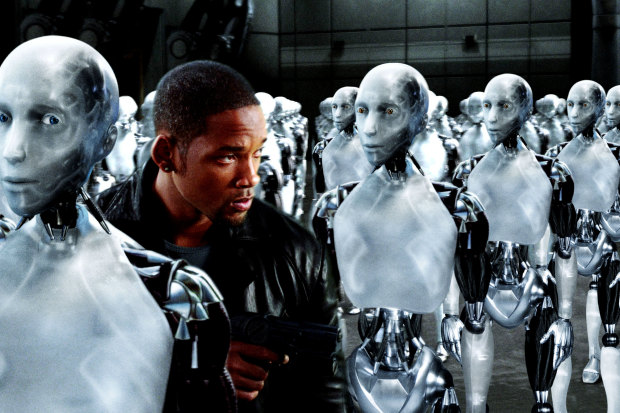

Will Smith battles another pesky AI that thinks it knows best (and a few thousand robots) in the 2004 film I, Robot.

How smart could machines get?

Of all the renderings of AI in science fiction, few occupy the minds of real-world researchers like the singularity – a hypothetical (and some say inevitable) tipping point where machine intelligence growth becomes exponential, out of control. In the 1960s, British mathematician I.J. Good spoke of an “intelligence explosion”, and everyone from Stephen Hawking to Elon Musk has since weighed in.

The theory is that as soon as we have a system as smart as a human, and we allow it to design a system superior to itself, we’ll kick off a domino effect of ever-increasing intelligence that could shift the balance of power on Earth overnight. “Humans, who are limited by slow biological evolution, couldn’t compete, and would be superseded,” Hawking told the BBC in 2014.

And, if AI were ever smart enough to be put in charge and make decisions for us, as is imagined in films such as I, Robot and The Matrix, what if its radical take on efficiency involves enslaving or “powering down” humans (i.e. mass murder)? Remember the glowing red eye of HAL the AI in 2001: A Space Odyssey, who decided the best thing to do, when faced with a crisis far out in space, was to stage a mutiny against his human crew? Musk himself says that, for a powerful AI, wiping out the human race wouldn’t be personal – if we stood in its way, it would be a matter of course, like squishing an ant hill to build a road.

When we refer to “intelligence” in machines, we usually mean we’ve taught a computer to do something that in humans requires intelligence, Walsh says. As of 2021, those smarts are still very narrow – beating a human in a game of chess, for example. AI enthusiasts point to machines helping write music or mimicking the styles of great painters as signs of burgeoning creativity, but such demonstrations still rely on considerable human input, and results are often random or spectacularly bad. The limits of deep learning again mean true spontaneity, originality, is lacking. At IBM, Arvind Krishna imagines you could train an AI on images of what is and isn’t beautiful, good art and bad art, for example, but that would still be training the AI on the creator’s own tastes, not moulding a new artist for the world. Mostly, experts see machines becoming another tool to deepen human creativity and decision-making, revealing patterns and combinations that might have otherwise been missed.

Still, Walsh says there’s no scientific or technical reason why the gap between human and machine intelligence couldn’t close one day. “Every time we thought that we were special, that the sun went around the Earth, that we were different than the apes, we were wrong,” he says. “So to think there’s anything special about our intelligence, anything that we could not create and probably surpass in silicon, I think would be terribly conceited of the human race.”

Indeed, machines have a lot of apparent advantages over us mere flesh bags, as Hawking alluded to. They’re faster thinkers, with bigger, potentially infinite memories; they can network and interface in a way that would be called telepathy if a human could do it. And they’re not limited by their physical bodies.

In Scott-Morgan’s case, transforming into a cyborg has already come with unexpected benefits. He can no longer speak on his own – “I’m answering these questions long after my body has stopped working sufficiently well to keep me alive,” he writes instead – but through his new robot voice, he can communicate in any language. In May, his digital avatar even broke into song during a live interview with broadcaster Stephen Fry. His wheelchair, meanwhile, will soon allow him to stand, so he will tower over his fellow mortals and hopefully, with the aid of an inbuilt AI, it will drive itself wherever Scott-Morgan wishes to go. (“I envision being able to speed through an obstacle course or safely make my way through a showroom of porcelain vases.”)

The hair of his avatar is never out of place “and my powers will double every two years”. “I’ll be a thousand times more powerful by the time I’m 80.” He’s working on programming in a maniacal laugh for his avatar, too.
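The arithmetic behind that boast is easy to check. The sketch below simply assumes the doubling every two years continues without interruption, which is the premise of the quote rather than an established fact, and the function names are invented for this example.

```python
import math


def growth_factor(years, doubling_period=2.0):
    """How many times more powerful something is after steady doubling."""
    return 2 ** (years / doubling_period)


def years_to_reach(factor, doubling_period=2.0):
    """How long steady doubling takes to reach a given multiple."""
    return doubling_period * math.log2(factor)


if __name__ == "__main__":
    print(growth_factor(20))                # ten doublings in 20 years
    print(round(years_to_reach(1000), 1))   # years needed for a thousandfold gain
```

Ten doublings give a factor of 1024, so a thousandfold improvement takes just under 20 years at that pace.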

Of course, because these AI networks are being built by humans, they may inherit the worst of us along with the best. We’ve seen this already on platforms such as Facebook and YouTube where AI used to curate user content has been shown to veer sharply into extremism and misinformation. Or police surveillance networks “learning” their human developers’ cultural prejudices. And, because AIs operate using complex mathematics, they are often themselves a black box, hard to scrutinise. Experts, including the late Hawking, have stressed that regulation and ethical frameworks must catch up fast to the technology, so we can maximise its social good, not just profit margins.

But what we may learn, too, is that there’s a ceiling to how intelligent something can be. “The universe is full of fundamental limits,” Walsh says. “It might not be [as simple as] we wake up one day and the computers can program themselves. I suspect that we will get smarter computers, but it will be the old-fashioned way, through our sweat, ingenuity and perseverance.”

While Marshall doubts we’ll ever create a machine that is itself conscious (along the lines of, say, the eloquently self-aware cyborgs in Blade Runner), he is wary of the new push for robots or algorithms that can evolve independently – designed to “breed” the way computer viruses spread now and so rewrite and advance their own programming. “I don’t think that’s the path,” Marshall says. “I think we need to always know what it does, and, if it can evolve on its own, well, life finds a way …”

How can you tell? Cyborgs called “replicants” are much like humans in the 1982 sci-fi film Blade Runner. Credit: Fair Use

Will we use brain chips to merge with machines?

Rather than turning to one all-knowing AI to run the show, many experts think it more likely we will draw on the power of machines to improve our own thinking. If we had a better way to connect with computers, closer than our screens, futurists wonder if we could surf the internet with our minds, back up our memories to the cloud, even “download” ready-formed skills such as a second language or another sense entirely like echolocation or infrared vision.

In 2020, Elon Musk was ruling out none of this when he introduced the world to a pig called Gertrude and the coin-sized computer chip in her brain he hoped would allow people to plug in directly to machines one day. “It’s kind of like a Fitbit in your skull with tiny wires,” Musk said, conceding “this is sounding increasingly like a Black Mirror episode.” In 2021, a monkey with the same chip, made by Musk’s company Neuralink, was shown playing the video game Pong using only his mind to control the cursor.

Labs, including military labs, around the world have been developing neural implants for more than a decade, mostly to help people with paralysis operate robotic limbs and those with epilepsy head off seizures. In 2016, an implant connected to a robotic arm even gave back the sensation of touch as well as movement to a man paralysed from the neck down – he used it to fist-bump president Barack Obama.

But this is still new technology, so far involving about 100 electrodes inserted into the brain that read its neural signals and send them wirelessly back to a machine. Neuralink’s prototype has more than 1000 electrodes, each smaller than a human hair, and grand claims of fast insertion into the skull using robotic surgery (and no need for even a general anaesthetic).

Plunging anything into the brain is risky and can cause damage. But in 2016 two neurologists at the University of Melbourne, Tom Oxley and Nicholas Opie, developed a clever technique to insert an implant without the need for open surgery using, Oxley says, the veins and “blood vessels as the natural highway into the brain”. They’ve just received $52 million in funding from Silicon Valley to run more clinical trials of their own chip, called the Stentrode, in the US. It’s about the size of a paperclip and in Melbourne it’s helped patients with motor neurone disease text, email and bank online by thought alone.

Neuralink’s end goal is to develop a non-invasive headset instead of a chip but for now such external devices pick up a much weaker signal from the brain. Facebook, meanwhile, is looking at wearable wrist devices that would read your mind, literally, where nerves carry messages down to your hands, eventually allowing users to do away with the traditional mouse and keyboard and “type” at a speed of 100 words per minute just by thinking. Like Neuralink, helping patients with paralysis is their first goal, but they also plan to scale up to everyday users. Already, researchers funded by Facebook have managed to “translate” brain waves into speech with an accuracy rate of between 61 and 76 per cent (that beats Google Translate in some cases), using existing electrodes implanted in the brains of patients with epilepsy.

Some of this work being done by Facebook and Musk is “right out on the edge” for enhancement, says the chief executive of Bionics Queensland, Robyn Stokes, but it will likely benefit health applications along the way. Just as brain chips could become digital assistants of the mind, she imagines they could also help manage mental health conditions such as serious depression. “Those sorts of brain computer interfaces are really advancing quickly,” she says, pointing to the Stentrode. She expects an implant that can perform many functions inside the body, beyond reading brainwaves, will soon follow.

Even then, there are still concerns. While the brain’s now-famed plasticity could help it rewire around implants, for example, some experts warn it could also mean it quickly forgets how to perform important functions, if they are taken over by machines. What then if something fails?

Peter Scott-Morgan tries out AI technology that tracks his eye movements to spell out his speech. Credit: Cardiff Productions

Still, enthusiasts, or “transhumanists”, imagine the next stage of human evolution will inevitably be technological – future generations can expect reinforced bones and improved brain power thanks to cybernetic “upgrades”. In British drama Years and Years, a new parental nightmare plays out as a daughter announces she wants to “upload” her mind and live as a machine. (“I don’t want to be flesh. I want to escape this thing and become digital.”)

In his first book on robotics in 1984, long before his disease had emerged, Scott-Morgan himself considered how AI might unlock human potential, and vice versa. “AI on its own is like a brilliant jazz pianist, but without anyone to jam with,” he says now. “It’s nowhere near its full potential.” The duet of human and AI, meanwhile, “would seem close to magic ... a mutually dependent partnership, not a rivalry”. And, to his mind, it could well be the only route that doesn’t lead to a dead end. “I anticipate that otherwise there’ll be a crippling backlash against what’s typically perceived as the ‘uncontrolled rise’ of raw AI”.

Scott-Morgan plans for his eye-controlled communication interface to rely more and more on its underlying AI to generate his speech. That means sometimes “what comes out will not be what biological Peter was planning to say. And I’m very comfortable with that. I keep reassuring [everyone] I have absolutely no qualms about technology potentially making me appear cleverer, or funnier, or simply less forgetful, than I was before.”

Others imagine a greater fusion of robotics, especially nanotech, with animals too. Already parts of nature are being re-engineered as technology in the lab – from viruses repurposed as vaccines and computer chips that mimic the function of human organs to a robot-fish hybrid sent down as a deep-sea probe to collect data beneath the waves. Both the US and Russian armies have kitted out trained dolphins as underwater spies over the years, so perhaps it’s no surprise military researchers have been looking at going further – even putting mind-controlling brain chips into sharks next. And, if bees die out, some experts say cyborg insects may be needed to pollinate plants in their place. All this again raises the strange question of when something is alive, or conscious, and whether we are building better robots – or creating new life entirely.

The Terminator robots have no plans to co-exist with humans. They want the whole planet. Credit: Fair Use

So, about those killer robots...?

Even if we don’t get shark cyborgs, low-cost lethal machines are already changing the face of warfare. Imagine fighter drones talking to one another to find bombing targets, instead of a human pilot back at a base. Or swarms of explosive drones slamming themselves into people and buildings.

These are not visions of the future but news stories from 2020. According to a recent UN report, Turkish drones, packing explosives and facial recognition cameras, were sent out by Libya’s army in 2020 to eliminate rebels via “swarm attack” in Tripoli, without requiring a remote connection between drone and base. They were, effectively, hunting their own targets. And the tech on board was not much more impressive than what you’d find on a smartphone. Meanwhile, the Poseidon is a new class of robotic underwater vehicle that Russia is said to have already made, which can travel undetected and launch cobalt bombs to irradiate entire coastal cities – all unmanned.

Machines that decide to kill like this, based on their sensors and a pre-programmed target profile, are making humanitarian groups increasingly nervous. The International Committee of the Red Cross wants the world’s governments to ban fully autonomous weapons outright. ICRC president Peter Maurer says they will make it difficult for countries to comply with international law, “in effect substituting human decisions about life and death with sensor, software and machine processes”.

Walsh agrees autonomous killer robots raise a host of ethical, legal and technical problems. If things go wrong or they break international law, who is held accountable? Should it be the programmer, the commander or the robot on trial for war crimes? “They’re not sentient, they’re not conscious, they can’t have empathy, they can’t be punished,” Walsh says. “And that takes us to a very, very dark place. It would be terribly destabilising and would change the speed and scale of war.”

Of course, he adds, autonomous systems built for defence, such as the robots used to clear landmines, show that AI can reduce casualties in war too. And computers will continue to come online that can process battlefield data and make recommendations faster than humans ever could. “But [we need] human oversight, human judgment, which is still significantly better than machines, at least today,” Walsh says.

He thinks we should ban lethal autonomous weapons as we have chemical and biological weapons (as well as blinding lasers and cluster munitions), with enforcement powers for the UN to check no rogue state is stepping out of line.

The problem is that such bans rarely happen before things get ugly. For chemical weapons, it took the horrors of the First World War.

“I’m fearful that we won’t have the initiative to do the same here until we’ve seen such weapons being used,” Walsh says. “A swarm of robot drones, hunting down humans and killing them mercilessly. It will look like a Hollywood movie.”
